What the new Bing report reveals about AI search visibility (and why it matches the social shift)

Microsoft launched the Bing AI Performance Report in February 2026. It's the first time most publishers can see whether AI tools are citing their content. What the data shows reinforces what social platforms already told us about how discovery works now.

Introduction

In February, Microsoft launched a report inside Bing Webmaster Tools that shows publishers how often their content is cited in Copilot and Bing's AI answers, and which grounding queries led to those citations. For most brands, it's the first time this data has been visible at all.

For years, AI search visibility has been guesswork. You could prompt an AI tool yourself and see whether your site came up. You could read industry coverage about how generative search was reshaping discovery. What you couldn't do was open a dashboard and see, at scale, whether your content was being cited and for which queries. The Bing AI Performance Report is the first answer to that question that comes from the platform itself.

This piece picks up where "Keyword stuffing on social media is following a similar arc as early SEO" left off. That piece argued that social platforms have moved to semantic content understanding, as search engines did a decade earlier. AI search has worked semantically from day one; that's how large language models read content. What's new is measurement. The Bing report makes AI citation visibility a metric, not a hunch.

What the report actually shows

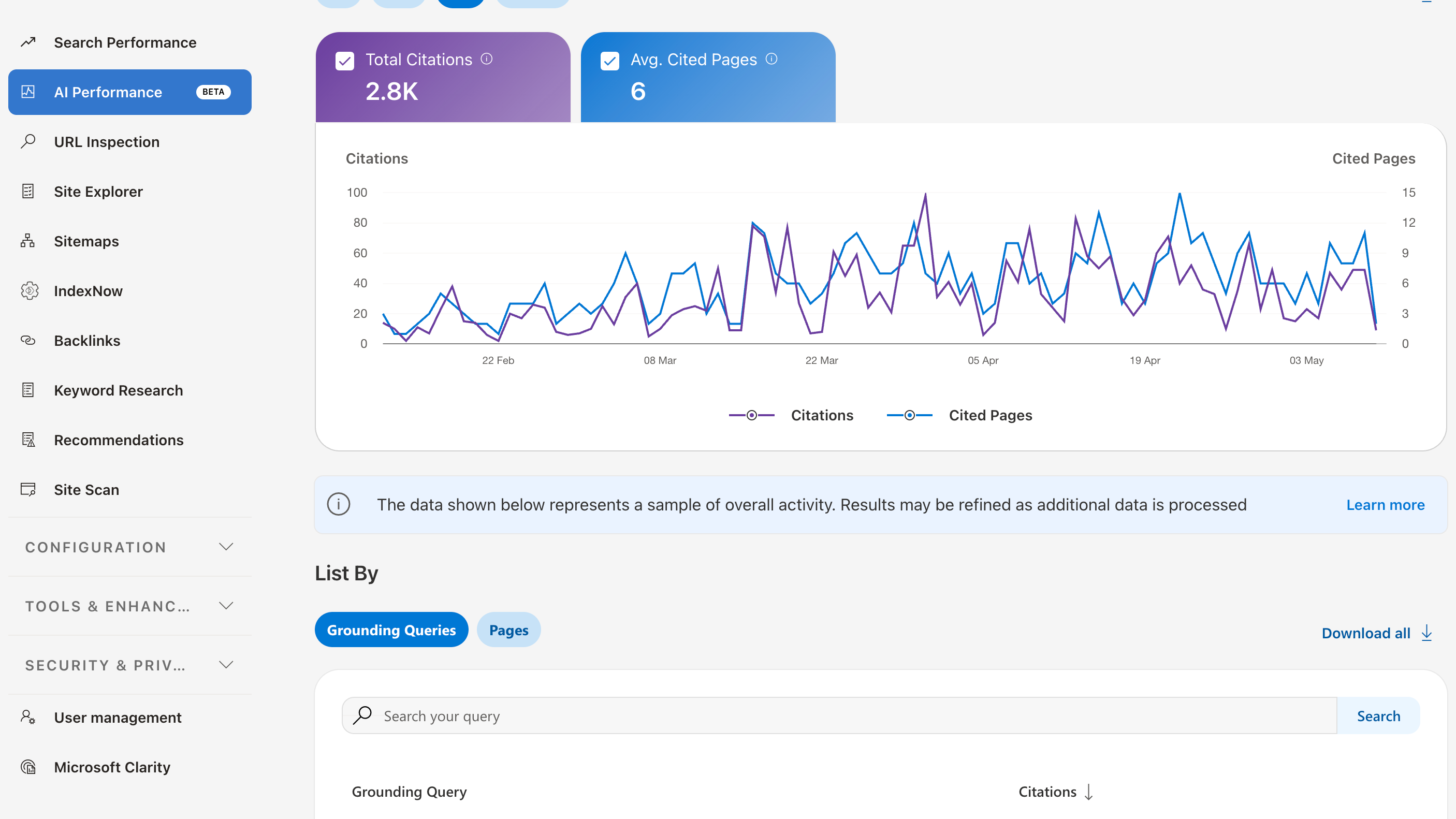

The AI Performance Report covers Microsoft Copilot, AI-generated summaries in Bing search, and select partner integrations. The dashboard displays:

- Total citations: the number of times your site's pages appear as sources in AI-generated answers during the selected date range.

- Avg. cited pages: the average number of unique pages cited per day during the selected date range.

- Grounding queries: the phrases the AI used to retrieve and cite content.

- Page-level citation activity: citation counts per URL.

- Visibility trends over time: a timeline view of citation patterns.

Of these, grounding queries are the most useful field for content strategy. A grounding query isn't the prompt the user typed. It's the rephrased query the AI generated internally to find a source, which makes it a direct signal of how the system reads and understands your content's topic.
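The first two metrics in the list above are simple aggregates, and it can help to see exactly what they count. The sketch below is a minimal illustration, assuming a hypothetical per-citation record of (date, cited URL, grounding query). The report has no API yet, so this record layout and the `summarize` helper are assumptions for illustration, not Microsoft's schema or calculation. It also assumes that days with no citations count as zero in the daily average.

```python
from collections import defaultdict
from datetime import date

# Hypothetical record layout: (day, cited URL, grounding query).
# This is an assumption for illustration, not the report's real export format.
Citation = tuple[date, str, str]


def summarize(citations: list[Citation], start: date, end: date) -> dict[str, float]:
    """Total citations and average unique cited pages per day for [start, end]."""
    in_range = [c for c in citations if start <= c[0] <= end]

    # Collect the distinct URLs cited on each day.
    pages_by_day: dict[date, set[str]] = defaultdict(set)
    for day, url, _query in in_range:
        pages_by_day[day].add(url)

    # Assumption: every day in the range is in the denominator, even days with zero citations.
    num_days = (end - start).days + 1
    avg_cited_pages = sum(len(urls) for urls in pages_by_day.values()) / num_days
    return {"total_citations": len(in_range), "avg_cited_pages": avg_cited_pages}


d1, d2 = date(2026, 2, 10), date(2026, 2, 11)
sample = [
    (d1, "https://example.com/guide", "what is structured data"),
    (d1, "https://example.com/guide", "structured data for ai search"),
    (d1, "https://example.com/faq", "how to add schema markup"),
    (d2, "https://example.com/guide", "what is structured data"),
]
print(summarize(sample, d1, d2))  # → {'total_citations': 4, 'avg_cited_pages': 1.5}
```

The same page cited twice on one day adds two to total citations but only one to that day's unique-page count. That's why a handful of heavily cited pages can produce a high citation total alongside a low average of cited pages.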

Why this matters now, not before

AI search hasn't changed direction. Large language models have been reading content semantically since they were first deployed in search. They process page content, structure, and entity signals to determine relevance. The behaviour has been consistent.

What changed is visibility into the behaviour. Publishers can now answer questions they couldn't answer before:

- Is our content actually being cited in AI answers, and how often?

- Which pages are AI tools pulling from?

- What language does the AI use to find us?

Before February, those questions had no clean answer. The Bing report turns hunches into a dashboard. The report is still in public preview and the data isn't yet available via API, so coverage and access are likely to expand through 2026.

The convergence with social platforms

The April Addy piece showed that social platforms have moved to direct content understanding. Instagram reads images and audio. TikTok scans video frames and transcribes speech. Captions and on-screen text are now ranking inputs, while hashtags have been demoted. It's the same pattern that defined search engines a decade ago, compressed into a couple of years.

Reading the Bing data alongside the social shift sharpens the convergence point. Every discovery surface now reads content directly. The signals differ across platforms (caption transcripts on TikTok, schema and structured data for AI search, in-page heading hierarchy for traditional Google), but the underlying model is the same: find the credible source for the topic, then surface it.

The work to be cited by AI looks substantially like the work to rank in Google, which looks substantially like the work to be discovered on TikTok. Substantive content on a topic the publisher knows well. Clear structure that systems can read. Credibility signals from beyond the page itself.

Patterns worth watching in the data

Three questions to ask of the citation report the first time you open it:

- Are the grounding queries the language your customers actually use, or a generic version of it? If the AI is finding you through "what is X" queries when your customers ask "best X for Y", there's a content gap worth filling.

- Do the cited pages match the ones you'd want cited? Thin overview pages getting cited more than deep expert pages is a structural signal worth acting on.

- Is visibility trending up or down across new prompts? Citation count over time is more useful than a single snapshot.
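The first and third questions lend themselves to a quick check. The sketch below is one way to do it: it buckets grounding queries by rough intent, compares that mix against the phrasing your customers use, and counts citations per ISO week. Everything here is an assumption for illustration. The intent patterns are deliberately crude regexes; `intent_gap` and `weekly_trend` are hypothetical helpers; and the inputs are assumed to be lists you've copied out of the dashboard, with customer phrasing gathered from sources such as site search or sales calls.

```python
import re
from collections import Counter
from datetime import date

# Hypothetical intent buckets; these crude patterns would need tuning for a real market.
INTENT_PATTERNS = {
    "definitional": re.compile(r"^(what is|what are|define)\b"),
    "comparative": re.compile(r"^(best|top)\b.*\bfor\b|\bvs\b"),
}


def intent(query: str) -> str:
    """Return the first matching intent label, or 'other'."""
    q = query.lower().strip()
    for label, pattern in INTENT_PATTERNS.items():
        if pattern.search(q):
            return label
    return "other"


def intent_share(queries: list[str]) -> dict[str, float]:
    """Fraction of queries falling into each intent bucket."""
    counts = Counter(intent(q) for q in queries)
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()} if total else {}


def intent_gap(grounding: list[str], customer: list[str]) -> dict[str, float]:
    """Positive values: intents customers use more often than the AI finds you for."""
    g, c = intent_share(grounding), intent_share(customer)
    return {label: c.get(label, 0.0) - g.get(label, 0.0) for label in sorted(set(g) | set(c))}


def weekly_trend(citation_days: list[date]) -> list[tuple[tuple[int, int], int]]:
    """Citation counts per ISO (year, week), oldest first."""
    counts = Counter(tuple(d.isocalendar()[:2]) for d in citation_days)
    return sorted(counts.items())


grounding = ["what is schema markup", "what is structured data", "best schema plugin for wordpress"]
customer = ["best schema plugin for shopify", "best structured data tool for ecommerce", "schema vs microdata"]
print(intent_gap(grounding, customer))
```

In this made-up example the `comparative` gap is about +0.67: customers ask "best X for Y" questions, but most grounding queries that find the site are "what is X". That's the content gap the first question describes. Counting per week rather than per day smooths out daily noise when you're checking whether visibility is trending up or down.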

What's still missing

The current report shows citation count, not click-through. You can see that you've been cited, but not whether anyone visited as a result. Microsoft has acknowledged this and noted that conversion measurement is shifting because AI answers resolve user intent on-page rather than driving onward clicks.

Google Search Console has had similar limitations with AI Overviews and AI Mode visibility. The reports show coverage without full click attribution. SparkToro and Datos data showed that AI Overviews reduce organic click-through rate by approximately 70%. The visibility is there. The click isn't always.

For brands measuring marketing impact, this means AI citation tracking belongs alongside other top-of-funnel discovery metrics, not bottom-of-funnel attribution. Brand mention frequency, content recall, and citation count are leading indicators. Direct revenue attribution from AI citations is still difficult.

Sits alongside SEO. Now also alongside social.

The work hasn't changed. It's mostly what was already on the list: Page Experience, Helpful Content, accessibility, structured data, off-site authority.

What's new is that, in the same year, measurement is catching up to the discipline on two surfaces: AI search via Bing, and social via the new ranking signals on Instagram and TikTok.

For brands wondering where to spend on visibility in 2026, the more useful framing is that there is one audit. It covers every surface customers now use, because the fundamentals carry across.

Closing notes

AI citations were guesswork until February. Now they're data. The data confirms what social already showed: substantive content travels.

Sources

- Bing AI Performance Report announcement, Microsoft Bing Blog, February 2026

- Keyword stuffing on social media is following a similar arc as early SEO, Addy LinkedIn, April 2026

- Experiential Search for more helpful digital experiences, HGDR Consulting blog

- Instagram algorithms and ranking signals, Instagram for Creators

- TikTok recommendation system and content understanding, TikTok Transparency

- Google search +21.64% in 2024 and AI Overviews CTR impact, SparkToro/Datos, January 2025